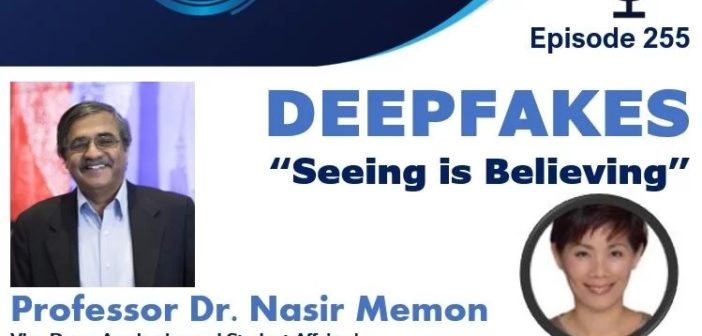

Professor Dr. Nasir Memon is Vice Dean for Academics and Student Affairs and a Professor of Computer Science and Engineering at the New York University (NYU) Tandon School of Engineering. He is a co-founder of NYU’s Center for Cyber Security (CCS) in New York as well as at NYU Abu Dhabi.

He is an affiliate faculty member in the Computer Science department at NYU’s Courant Institute of Mathematical Sciences, and department head of NYU Tandon Online. He introduced cyber security studies to NYU Tandon in 1999, making it one of the first schools to implement the program at the undergraduate level. He is the founder of the OSIRIS Lab, CSAW, the Bridge to Tandon program, and the Cyber Fellows program at NYU. He has received several best paper awards and awards for excellence in teaching. He has served on the editorial boards of several journals, and was the Editor-in-Chief of the IEEE Transactions on Information Forensics and Security.

In this podcast, Professor Memon traces the highlights in the evolution of AI technologies and explains how breakthrough work in “deep” neural networks, powered by the rapid growth in processing power, led to the explosion of today’s “deepfakes”. Giving examples of convincing “deepfakes”, he also notes the emergence of “cheapfakes” and “shallowfakes”.

He points out how the landscape is changing as “deepfakes” become more prevalent, including the development of “deepfake-as-a-service” and other monetization opportunities for threat actors, and the potential for misinformation to be weaponised by nation-state actors as “deepfakes” are added to their cyber-attack arsenal.

As “deepfake” creation and detection become a cat-and-mouse game, he stresses the need to go beyond passive detection to a proactive approach of embedding integrity measures for image provenance.

With truth under attack as “deepfake” technologies grow more sophisticated, he also sees the need for a societal shift towards greater scepticism in general and advises us: “do not jump into conclusions, look for corroborative evidence”.

Recorded Singapore 17th March 2021 7.15am / New York 16th March 2021 7.15pm.